The Great Equalizer: An Exploration of EQ with MeldaProduction’s MTurboEQ

EQ discussions with MeldaProduction's audio software guru.

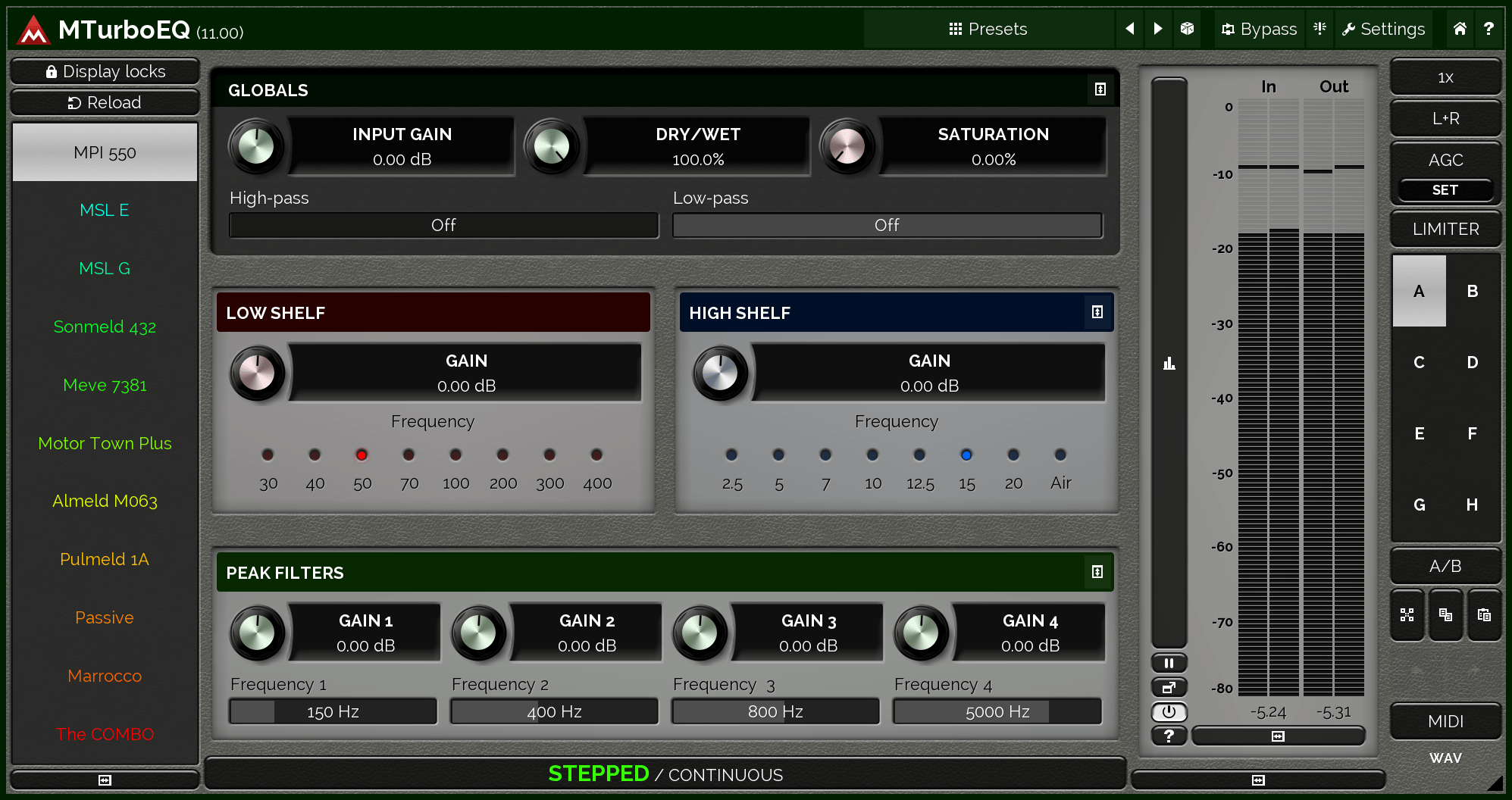

Coming on the heels of its MTurbo series of compression and reverb plugins, MeldaProduction’s MTurboEQ aims to provide the same flexibility as its effects forebears. As before, the primary focus is on range: MTurboEQ includes software reproductions of a variety of classic hardware equalizers, and Melda’s approach is again to generalize the front panels, so users can learn and apply essentially the same interface and features across all of them.

For nearly all of the included algorithms, users get access to low and high-shelf filters, four peak filters, saturation, dry/wet controls, and automatic gain compensation. There’s support for mono and stereo signals, as well as mid/side encoding for stereo field processing, separate left and right channels, and up to eight channels of surround audio.
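For readers unfamiliar with mid/side encoding, here is a minimal sketch of the standard sum-and-difference conversion. It is a generic illustration, not MTurboEQ's implementation; the function names `ms_encode` and `ms_decode` and the 1/2 scaling convention are assumptions chosen for this example.

```python
def ms_encode(left, right):
    """Convert left/right sample lists to mid/side (sum and difference)."""
    mid = [(l + r) / 2 for l, r in zip(left, right)]   # what both channels share
    side = [(l - r) / 2 for l, r in zip(left, right)]  # what differs between them
    return mid, side


def ms_decode(mid, side):
    """Convert mid/side sample lists back to left/right."""
    left = [m + s for m, s in zip(mid, side)]
    right = [m - s for m, s in zip(mid, side)]
    return left, right


# Round trip on a few hypothetical samples: decoding restores the input.
left_in = [0.5, -0.25, 1.0]
right_in = [0.5, 0.25, 0.0]
mid, side = ms_encode(left_in, right_in)
left_out, right_out = ms_decode(mid, side)
```

An EQ applied to `mid` alone shapes the center of the image, while one applied to `side` alone shapes the stereo width, which is why the mode is useful for stereo field processing.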

We spoke with Melda founder and software designer Vojtech Meluzin about his approach to EQ, both as a designer and as a user, and about what he’s learned in bringing nearly a dozen classic analog EQs under a single, unified (digital) roof.

What did you try to bring to the world of EQ with this plugin that hasn’t been done before? What sets it apart?

The idea behind MTurboEQ is to put all of the famous EQs into a single interface and to simplify and generalize the GUI for a faster and easier workflow. There’s not much magic in EQs, really—companies often try to exploit the fact that users don’t know that, and sell them overpriced EQs, one by one, with complicated workflows that look like the hardware (which only makes things harder to use). The only “drawback” is that MTurboEQ doesn’t look like the original hardware—which, in our opinion, would just be stupid anyway.

What’s something you think most people don’t understand about the process and art of EQing?

I think most people don’t understand how to use an EQ. With all the possibilities it can be too complex for most people, which is why they tend to use the analog EQs. They are just easier to use—they usually only have a few knobs and not too many options, making it easier to choose the final one—just not necessarily the best one. But if you need stuff done quickly…

The mojo of the analog EQs isn’t in some magic behind them. It’s just that the designers of these super expensive and limited devices needed to use what they had (namely, all the limitations of analog components) and build something that works well enough for most cases. So they designed the EQ curves and frequency/gain interactions nicely. The “stepped” user interface (jumps of 1dB, etc.) is also useful since, for humans, it’s really hard to compare, say, +10dB and +11dB if the gain control is set to +10.3dB. But in the end, there’s no magic there; it’s about workflow. And if you take the analog EQ or its simulation, you can get good results quickly.
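The stepped behavior described here can be sketched as snapping a continuous gain value to the nearest step. This is only an illustration under assumed names (`snap_gain_db`, `db_to_linear`) and an assumed default step of 1dB; it does not describe how MTurboEQ or any hardware unit implements its controls.

```python
def snap_gain_db(gain_db, step_db=1.0):
    """Snap a gain in dB to the nearest multiple of step_db (hypothetical helper)."""
    return round(gain_db / step_db) * step_db


def db_to_linear(gain_db):
    """Convert a gain in dB to a linear amplitude factor."""
    return 10 ** (gain_db / 20)


# A knob left at +10.3dB lands on the +10dB step.
stepped = snap_gain_db(10.3)
factor = db_to_linear(stepped)
```

With stepped values, the listener compares a small set of clearly different settings rather than an effectively infinite range of near-identical ones.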

A fully featured parametric (and ideally dynamic) EQ can get you much more, but with that power comes responsibility—it’s easy to fuck things up, or spend a week tweaking a single EQ that way.

One thing I think needs to be avoided is using presets. Beginners often search for tutorials, which tell you “use this EQ, and use these settings for vocals.” That’s just stupid—don’t do that. You can use it to learn how to EQ, but every signal is different, and every song context is different; eventually, EQs are actually quite easy to use, but you first need to train your ears, and that’s the work not many are willing to do.

It’s interesting that this software makes use of the GPU (Graphics Processing Unit)—is that something specific to your approach? Or is this kind of processing generally quite GPU-friendly?

MTurboEQ uses GPU acceleration for visualization only. Normally, apps and plugins use the OS services to perform drawing. These are usually pretty advanced, and as such are often performed in software, which of course takes power from the CPU (Central Processing Unit), which we want for audio processing. Instead, we use the GPU directly for rendering the graphics, which is especially advantageous since there’s often quite a lot of visualization.

Using the GPU for audio processing is also possible, but rarely advantageous because there’s a rather large latency when transferring the data to the GPU and back. And while GPUs have way more cores than traditional CPUs, the individual cores are rather slow. It just doesn’t fit with audio processing concepts, which are generally sequential.
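To illustrate why this kind of processing is sequential, here is a sketch of a textbook peak filter built from the widely published Audio EQ Cookbook biquad formulas. It is an assumption chosen for illustration, not the algorithm used in MTurboEQ, and the names `peak_coefficients` and `biquad` are hypothetical. Each output sample depends on the two previous outputs, so sample n cannot be computed before sample n-1.

```python
import math


def peak_coefficients(f0, gain_db, q, fs):
    """Peaking-EQ biquad coefficients (Audio EQ Cookbook form)."""
    amp = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2 * q)
    b = (1 + alpha * amp, -2 * math.cos(w0), 1 - alpha * amp)  # feedforward
    a = (1 + alpha / amp, -2 * math.cos(w0), 1 - alpha / amp)  # feedback
    return b, a


def biquad(samples, b, a):
    """Direct form I biquad: every output feeds back into the next two."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        # y depends on y1 and y2, so the loop must run in order.
        y = (b[0] * x + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2) / a[0]
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out


# Hypothetical settings: +6dB peak at 1 kHz, Q of 1, 48 kHz sample rate.
b, a = peak_coefficients(1000.0, 6.0, 1.0, 48000.0)
filtered = biquad([1.0, 0.0, 0.0, 0.0], b, a)
```

The feedback terms are what make the filter cheap on a single fast core and awkward to spread across many slow ones.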

In analyzing old EQ modules, which ones really stood out as having the best, most intuitive designs?

I can’t really say which is best; they just sound different, and we designed the generalized GUI (Graphical User Interface) so all of them are easy to use. The “tricks” people learned over the decades, like turning one knob right and the other left at the same time, are now available in the generalized GUI as a simple control, so nobody really needs to know these tricks and can just follow their ears.

The worst originals, though, would be the passive ones, in my opinion. No surprise there—it’s rather difficult to design passive electronic components, which can do little more than just remove some frequencies and lower the volume.

Do you personally prefer using the “stepped” or continuous approach to EQing?

Actually, when it comes to EQing, I like the stepped approach. While it’s a tiny bit limiting, the 1dB doesn’t do enough to mess things up, and it’s much easier for humans to hear the difference and make a final choice on which way to go.

What’s your general approach to EQing? How long do you spend EQing parts in an average production?

I mostly use MAutoDynamicEQ for individual tracks—when you know what you want, you just use the parametric EQ. But I use MTurboEQ on the master, mainly because of the low and high shelves. For quick treatment using shelves and high-pass, when used on individual tracks, I’m usually not so meticulous—under a minute per track, I’d say. I don’t like to “overdo it”—after some time, it only gets worse and worse.